After several discussions at work about the feasibility of using AWS CodeBuild and AWS CodePipeline to verify the integrity of our codebase, I decided to give it a try.

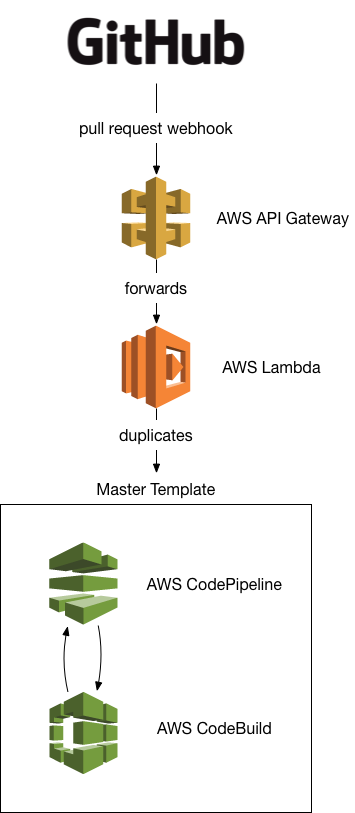

We use pull requests and branching extensively, so one requirement is that we can dynamically pick up branches other than master. Out of the box, AWS CodePipeline only works on a single branch, so I decided to use GitHub's webhooks, AWS API Gateway and AWS Lambda to support multiple branches dynamically:

Architecture

First, you create a master AWS CodePipeline, which will serve as a template for all non-master branches.

Next, you set up an AWS API Gateway endpoint and an AWS Lambda function that can create and delete AWS CodePipelines based on the master pipeline.

Lastly, you wire GitHub webhooks to the AWS API Gateway endpoint, so that opening a pull request duplicates the master AWS CodePipeline, and closing the pull request deletes the copy again.
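To make the open/close flow concrete, here is a minimal sketch of how the Lambda function might decide what to do with an incoming webhook. It assumes the function receives GitHub's `pull_request` event payload as JSON and reads only the real `action` and `pull_request.head.ref` fields. The `decide` function, the `pipelineOp` type and the choice to treat `reopened` like `opened` are illustrative assumptions, not the original implementation.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// pullRequestEvent holds the subset of GitHub's pull_request webhook
// payload that is needed here.
type pullRequestEvent struct {
	Action      string `json:"action"`
	PullRequest struct {
		Head struct {
			Ref string `json:"ref"` // name of the pull request's branch
		} `json:"head"`
	} `json:"pull_request"`
}

// pipelineOp is a hypothetical type describing what to do with a branch pipeline.
type pipelineOp string

const (
	opCreate pipelineOp = "create" // duplicate the master pipeline
	opDelete pipelineOp = "delete" // remove the branch pipeline
	opIgnore pipelineOp = "ignore" // nothing to do
)

// decide maps a pull_request payload to a pipeline operation and the
// branch it applies to.
func decide(payload []byte) (pipelineOp, string, error) {
	var ev pullRequestEvent
	if err := json.Unmarshal(payload, &ev); err != nil {
		return opIgnore, "", err
	}
	branch := ev.PullRequest.Head.Ref
	switch ev.Action {
	case "opened", "reopened": // assumption: reopening recreates the pipeline
		return opCreate, branch, nil
	case "closed": // sent for both merged and unmerged pull requests
		return opDelete, branch, nil
	default: // e.g. "synchronize"; assumes the branch pipeline picks up new commits itself
		return opIgnore, branch, nil
	}
}

func main() {
	payload := []byte(`{"action":"opened","number":42,"pull_request":{"head":{"ref":"feature/login"}}}`)
	op, branch, err := decide(payload)
	if err != nil {
		panic(err)
	}
	fmt.Println(op, branch) // create feature/login
}
```

Actions such as `synchronize` (new commits pushed to the pull request) can be ignored in this sketch, assuming the branch pipeline's source action detects new commits on its own.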

Details

AWS Lambda

For the AWS Lambda function I decided to use Go and eawsy, as the combination makes deploying Lambda functions extremely easy.

The implementation is straightforward and relies on the AWS SDK for Go to interface with the AWS CodePipeline API.
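The core of the Lambda function is copying the master pipeline's declaration under a different name and branch. The sketch below shows only that transformation and uses the standard library instead of the AWS SDK for Go so that it runs on its own. It takes the `pipeline` object as returned by CodePipeline's GetPipeline (`name`, `stages`, `actions`, `configuration`), renames it, and sets the `Branch` configuration key of every action that has one, as the GitHub source action does. The function name `branchPipeline` is hypothetical; in a real function the result would be handed to CreatePipeline.

```go
package main

import (
	"encoding/json"
	"errors"
	"fmt"
)

// branchPipeline copies a pipeline declaration (the "pipeline" object that
// CodePipeline's GetPipeline returns), renames it and points every action that
// has a "Branch" configuration key, such as the GitHub source action, at branch.
// The declaration is handled as generic JSON so that all other fields survive.
func branchPipeline(master []byte, name, branch string) ([]byte, error) {
	var decl map[string]any
	if err := json.Unmarshal(master, &decl); err != nil {
		return nil, err
	}
	if decl == nil { // JSON null decodes to a nil map
		return nil, errors.New("empty pipeline declaration")
	}
	decl["name"] = name
	stages, _ := decl["stages"].([]any)
	for _, s := range stages {
		stage, _ := s.(map[string]any)
		actions, _ := stage["actions"].([]any)
		for _, a := range actions {
			action, _ := a.(map[string]any)
			cfg, ok := action["configuration"].(map[string]any)
			if !ok {
				continue // action without configuration
			}
			if _, hasBranch := cfg["Branch"]; hasBranch {
				cfg["Branch"] = branch // maps are references, so decl is updated in place
			}
		}
	}
	return json.Marshal(decl)
}

func main() {
	master := []byte(`{"name":"myapp-master","stages":[{"name":"Source","actions":[{"name":"Source",` +
		`"configuration":{"Owner":"acme","Repo":"myapp","Branch":"master"}}]}]}`)
	out, err := branchPipeline(master, "myapp-feature-login", "feature/login")
	if err != nil {
		panic(err)
	}
	fmt.Println(string(out))
}
```

Secret configuration values such as the GitHub source action's OAuthToken come back masked from GetPipeline, so they likely have to be supplied again before the copy is created.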

One catch is that the Lambda function's IAM role needs permissions to manage AWS CodePipelines, including iam:PassRole so that it can hand the pipeline's service role to the pipelines it creates:

```json
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Sid": "AllowCodePipelineMgmt",
      "Effect": "Allow",
      "Action": [
        "codepipeline:CreatePipeline",
        "codepipeline:DeletePipeline",
        "codepipeline:GetPipeline",
        "codepipeline:GetPipelineState",
        "codepipeline:ListPipelines",
        "codepipeline:UpdatePipeline",
        "iam:PassRole"
      ],
      "Resource": [
        "*"
      ]
    }
  ]
}
```

AWS API Gateway

The API Gateway is managed via Terraform and consists of a single API whose root resource is wired up to handle the webhooks. GitHub-specific headers are transformed so that they are accessible in the backend.
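The mapping template itself is not shown, so the following is only a sketch of what the backend side could look like. It assumes a hypothetical request template that wraps the body and two GitHub headers into one JSON document, e.g. `{"event": "$input.params('X-GitHub-Event')", "delivery": "$input.params('X-GitHub-Delivery')", "payload": $input.json('$')}`; the field names `event`, `delivery` and `payload` are assumptions. The Lambda function then only has to check the event name and can skip anything that is not a `pull_request` event, such as the `ping` GitHub sends when a webhook is created.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// webhookEnvelope is a hypothetical shape produced by an API Gateway request
// mapping template that forwards GitHub's headers alongside the body.
type webhookEnvelope struct {
	Event    string          `json:"event"`    // value of the X-GitHub-Event header
	Delivery string          `json:"delivery"` // value of the X-GitHub-Delivery header
	Payload  json.RawMessage `json:"payload"`  // the unmodified webhook body
}

// pullRequestPayload returns the body of pull_request events and reports
// false for every other event type (e.g. "ping" or "push").
func pullRequestPayload(raw []byte) (json.RawMessage, bool, error) {
	var env webhookEnvelope
	if err := json.Unmarshal(raw, &env); err != nil {
		return nil, false, err
	}
	if env.Event != "pull_request" {
		return nil, false, nil
	}
	return env.Payload, true, nil
}

func main() {
	raw := []byte(`{"event":"pull_request","delivery":"abc","payload":{"action":"opened"}}`)
	payload, ok, err := pullRequestPayload(raw)
	if err != nil {
		panic(err)
	}
	fmt.Println(ok, string(payload)) // true {"action":"opened"}
}
```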

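As GitHub will call this API Gateway endpoint, we need an integration response that returns a 200 with the appropriate headers, otherwise the webhook deliveries fail. The Access-Control-Allow-Origin header only matters for browser clients; GitHub's server-side deliveries do not enforce CORS: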

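```hcl
# Integration response for the webhook method on the API's root resource.
resource "aws_api_gateway_integration_response" "webhook" {
  rest_api_id = "${aws_api_gateway_rest_api.gh.id}"
  resource_id = "${aws_api_gateway_rest_api.gh.root_resource_id}"
  http_method = "${aws_api_gateway_integration.webhooks.http_method}"
  status_code = "200"

  # Pass the Lambda function's result through unchanged.
  response_templates = {
    "application/json" = "$input.path('$')"
  }

  # The method response must declare these headers for the mappings to apply.
  response_parameters = {
    "method.response.header.Content-Type"                = "integration.response.header.Content-Type"
    "method.response.header.Access-Control-Allow-Origin" = "'*'"
  }

  # Selection regex; ".*" matches any backend response.
  selection_pattern = ".*"
}
```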

AWS CodePipeline

The AWS CodePipeline that serves as the template is configured to run on the master branch.

This way, every merged pull request triggers a run of this pipeline, while each open pull request runs on its own, separate AWS CodePipeline. This is great because all pull requests can be checked in parallel.

The current implementation forces all AWS CodePipelines to be identical. It would be interesting to adjust this approach, e.g. by fetching the CodePipeline template from the repository so that pull requests can change it as needed.
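As a sketch of that idea, the function below prefers a pipeline declaration committed to the pull request's branch and falls back to the master pipeline's declaration otherwise. How the file is fetched (for example via GitHub's contents API) is left out, and both the `templateFor` function and a `pipeline.json` file in the repository are hypothetical. The check is deliberately shallow, since CodePipeline validates the full declaration when the pipeline is created anyway.

```go
package main

import (
	"encoding/json"
	"errors"
	"fmt"
)

// templateFor picks the pipeline template for a pull request: the declaration
// committed to the branch (e.g. a hypothetical pipeline.json) if there is one,
// otherwise the master pipeline's declaration.
func templateFor(repoTemplate, master []byte) ([]byte, error) {
	if len(repoTemplate) == 0 {
		return master, nil // branch does not ship its own template
	}
	// Shallow sanity check only; CodePipeline validates the full declaration
	// when the pipeline is created.
	var probe struct {
		Stages []json.RawMessage `json:"stages"`
	}
	if err := json.Unmarshal(repoTemplate, &probe); err != nil {
		return nil, fmt.Errorf("invalid pipeline template in repository: %w", err)
	}
	if len(probe.Stages) == 0 {
		return nil, errors.New("pipeline template in repository has no stages")
	}
	return repoTemplate, nil
}

func main() {
	master := []byte(`{"name":"myapp-master","stages":[{"name":"Source"},{"name":"Build"}]}`)
	tpl, err := templateFor(nil, master)
	if err != nil {
		panic(err)
	}
	fmt.Println(string(tpl))
}
```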

AWS CodeBuild

In my example the AWS CodeBuild configuration is static. However, one could easily make it dynamic, e.g. by placing AWS CodeBuild configuration files (such as a buildspec.yml) inside the repository. This way, pull requests could test different build configurations.

Outcome

The approach outlined above works very well. It is reasonably fast and technically achieves 100% utilization, since build resources are only used while a pipeline is actually running. It also offers great extensibility: one could easily use this approach to spin up entire per-pull-request environments and tear them down dynamically.

In the future I’m looking forward to working more with this approach, and maybe also abstracting it further to make it more reusable.

The source is available on GitHub.